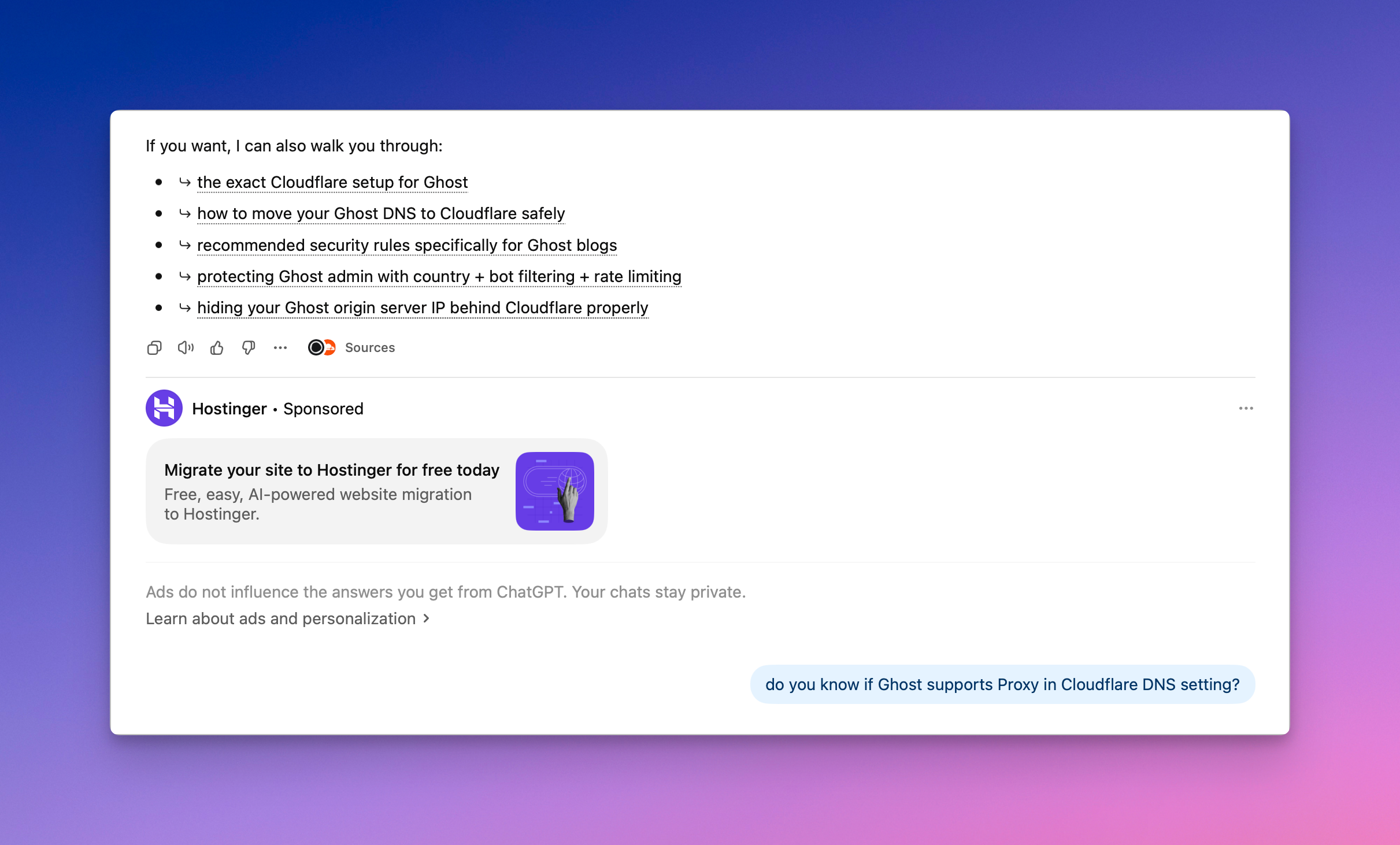

AI-Powered Title Suggestions Are Now Built Into My Micro.blog Editor

I made a small but useful improvement to my Micro.blog frontend: I added a Suggest Title option directly to the title field. The prompt it sends to Anthropic’s cheapest model is:

Suggest a short, compelling blog post title for the following content. Reply with only the title, no quotes, no explanation.
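The post doesn't show the frontend code, so here is a minimal sketch of how such a request could be made with Anthropic's Messages API, using only the Python standard library. Several details are assumptions rather than the author's setup: the model ID in `DEFAULT_MODEL` is only an example (swap in whichever model is currently cheapest), the `max_tokens` cap of 50 is arbitrary, the post text is assumed to follow the prompt after a blank line, and the quote-stripping cleanup is a defensive extra. The helper names `build_request_body`, `extract_title`, and `suggest_title` are hypothetical.

```python
import json
import urllib.request

# The prompt quoted in the post, verbatim.
TITLE_PROMPT = (
    "Suggest a short, compelling blog post title for the following content. "
    "Reply with only the title, no quotes, no explanation."
)

# Assumption: an example model ID; substitute the model you actually use.
DEFAULT_MODEL = "claude-3-haiku-20240307"
API_URL = "https://api.anthropic.com/v1/messages"


def build_request_body(post_text: str, model: str = DEFAULT_MODEL) -> dict:
    """Build a Messages API payload with the post text appended to the prompt."""
    # Assumption: the post body follows the instruction after a blank line.
    return {
        "model": model,
        "max_tokens": 50,  # assumed cap: titles are short, so keep output small
        "messages": [
            {"role": "user", "content": f"{TITLE_PROMPT}\n\n{post_text}"}
        ],
    }


def extract_title(response: dict) -> str:
    """Join the text blocks of a Messages API response and tidy the result."""
    text = "".join(
        block.get("text", "")
        for block in response.get("content", [])
        if block.get("type") == "text"
    )
    # Defensive cleanup in case the model wraps the title in double quotes
    # (straight or curly) despite being told not to.
    return text.strip().strip('"\u201c\u201d').strip()


def suggest_title(post_text: str, api_key: str, model: str = DEFAULT_MODEL) -> str:
    """Send the post to the API and return a suggested title (network call)."""
    request = urllib.request.Request(
        API_URL,
        data=json.dumps(build_request_body(post_text, model)).encode("utf-8"),
        headers={
            "x-api-key": api_key,
            "anthropic-version": "2023-06-01",
            "content-type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=30) as resp:
        return extract_title(json.load(resp))
```

Keeping payload construction and response parsing separate from the network call makes both easy to check without an API key. In a browser-based frontend, the `suggest_title` step would more sensibly live on a small server endpoint so the API key isn't exposed to the client.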

I wrote this blog post to test the feature, and it worked on the first try.