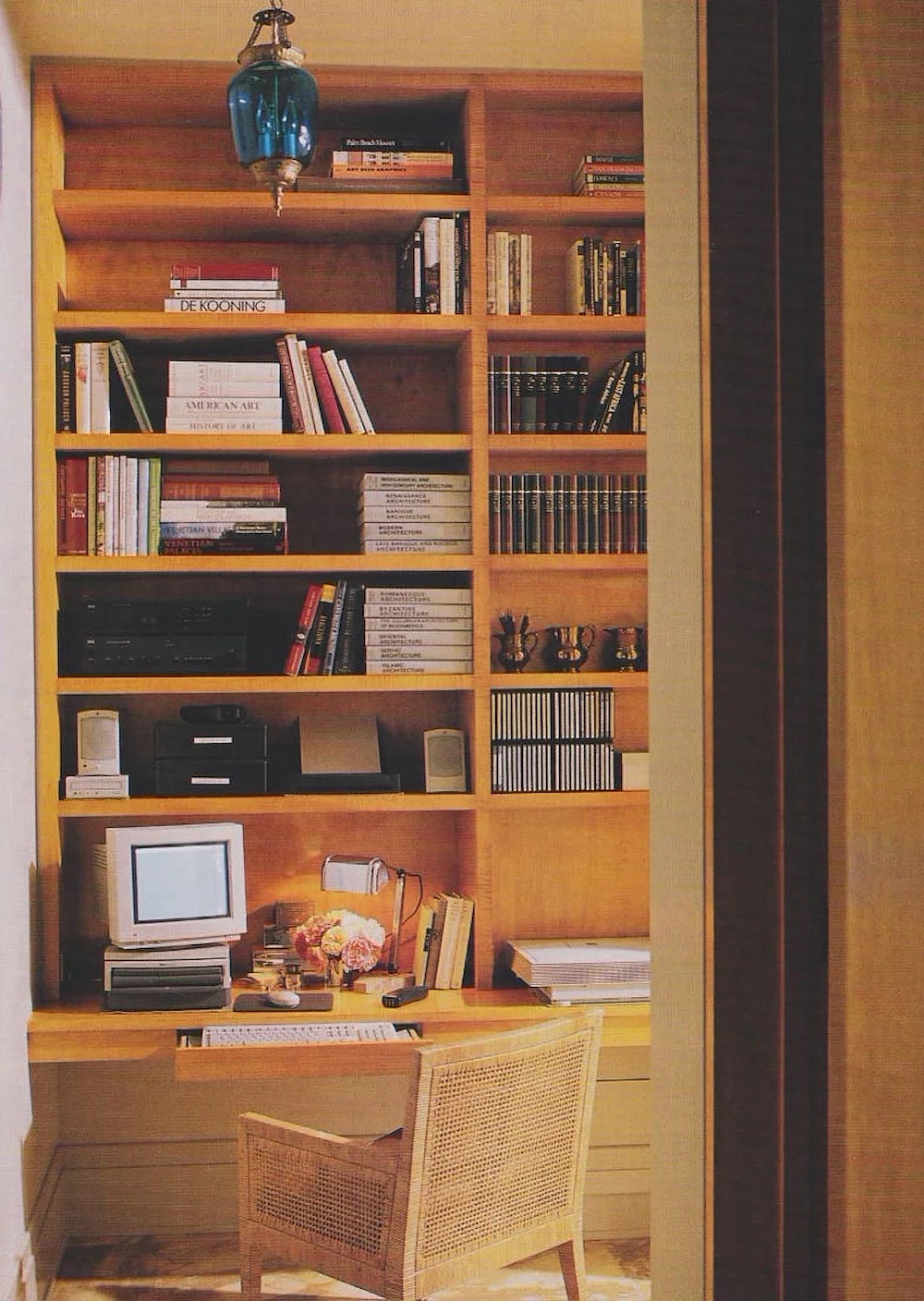

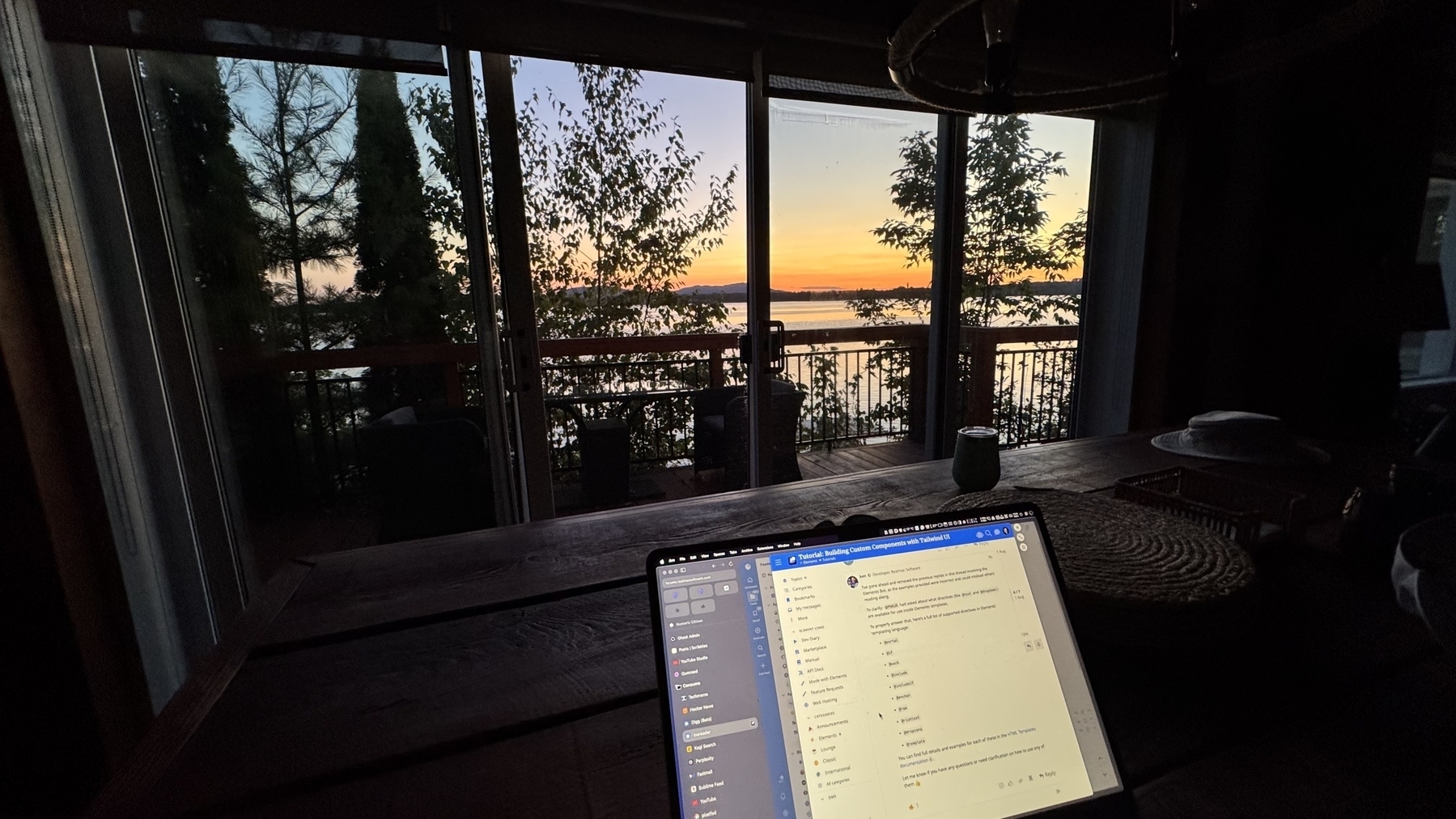

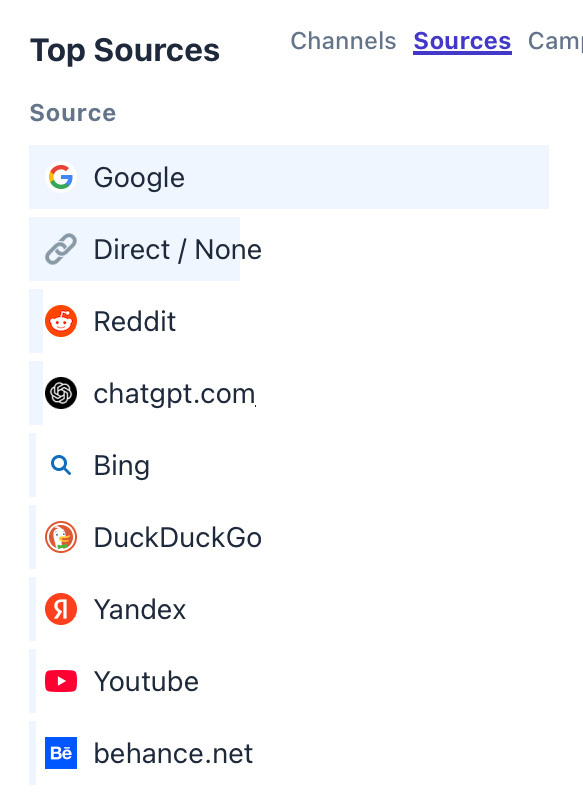

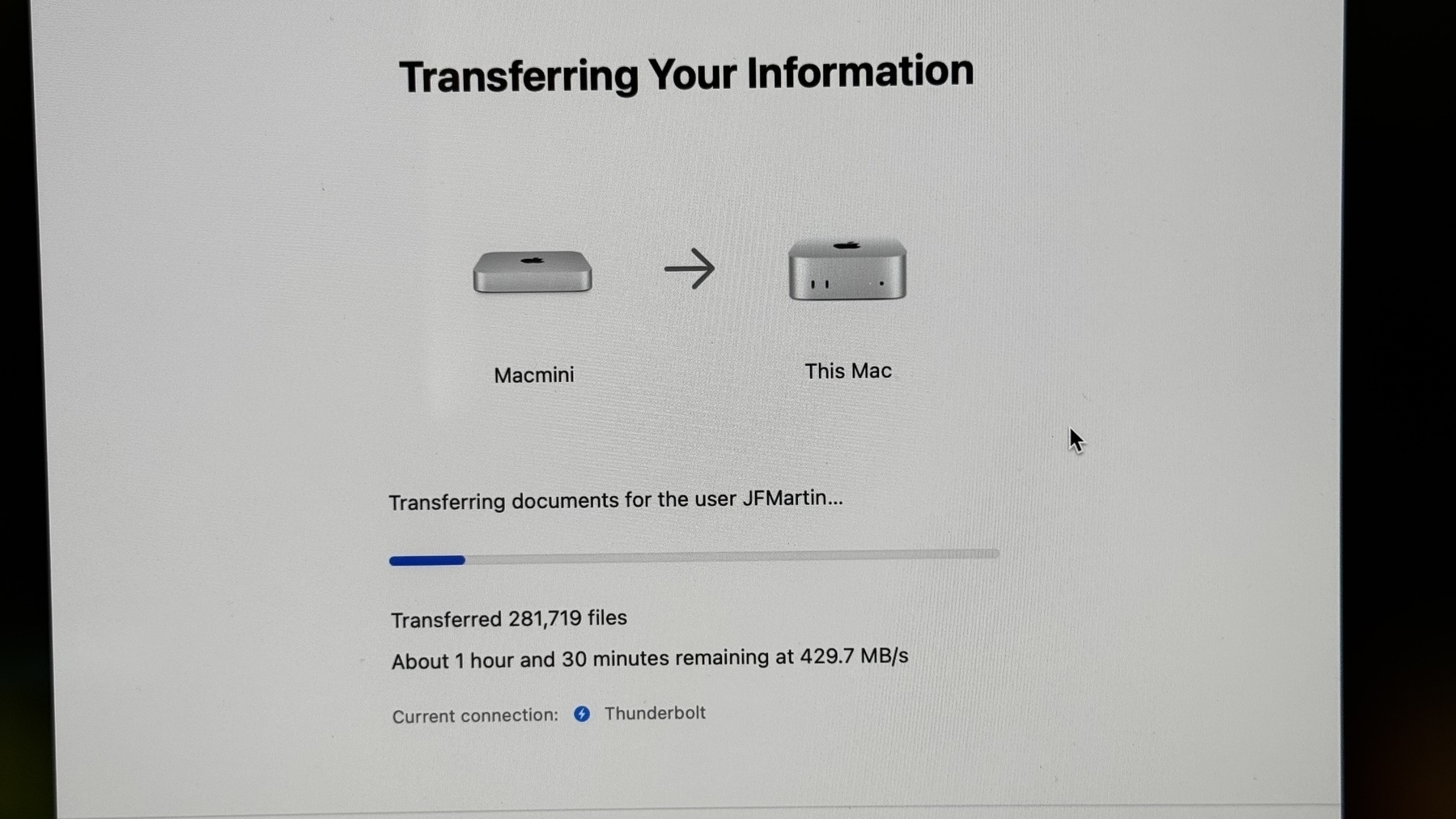

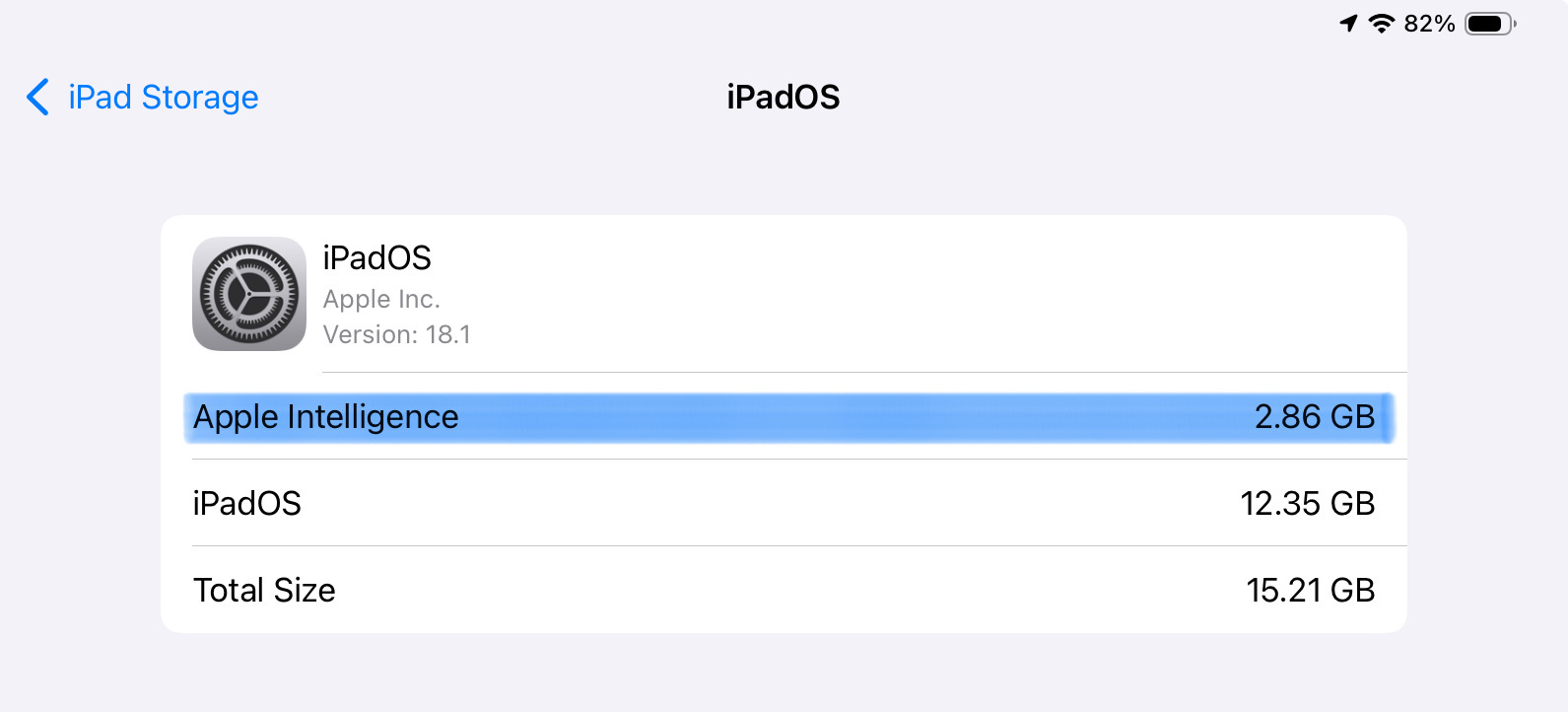

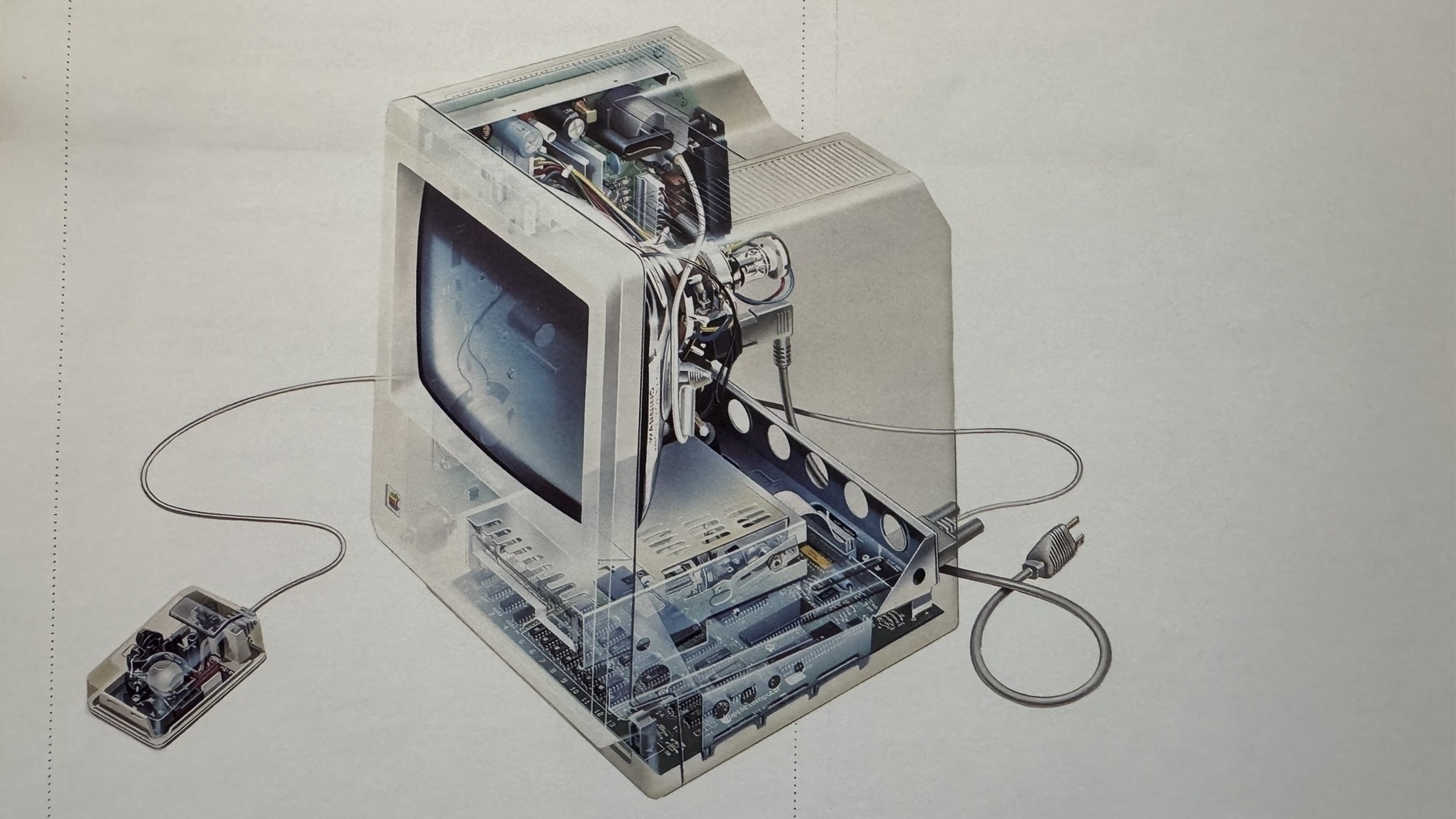

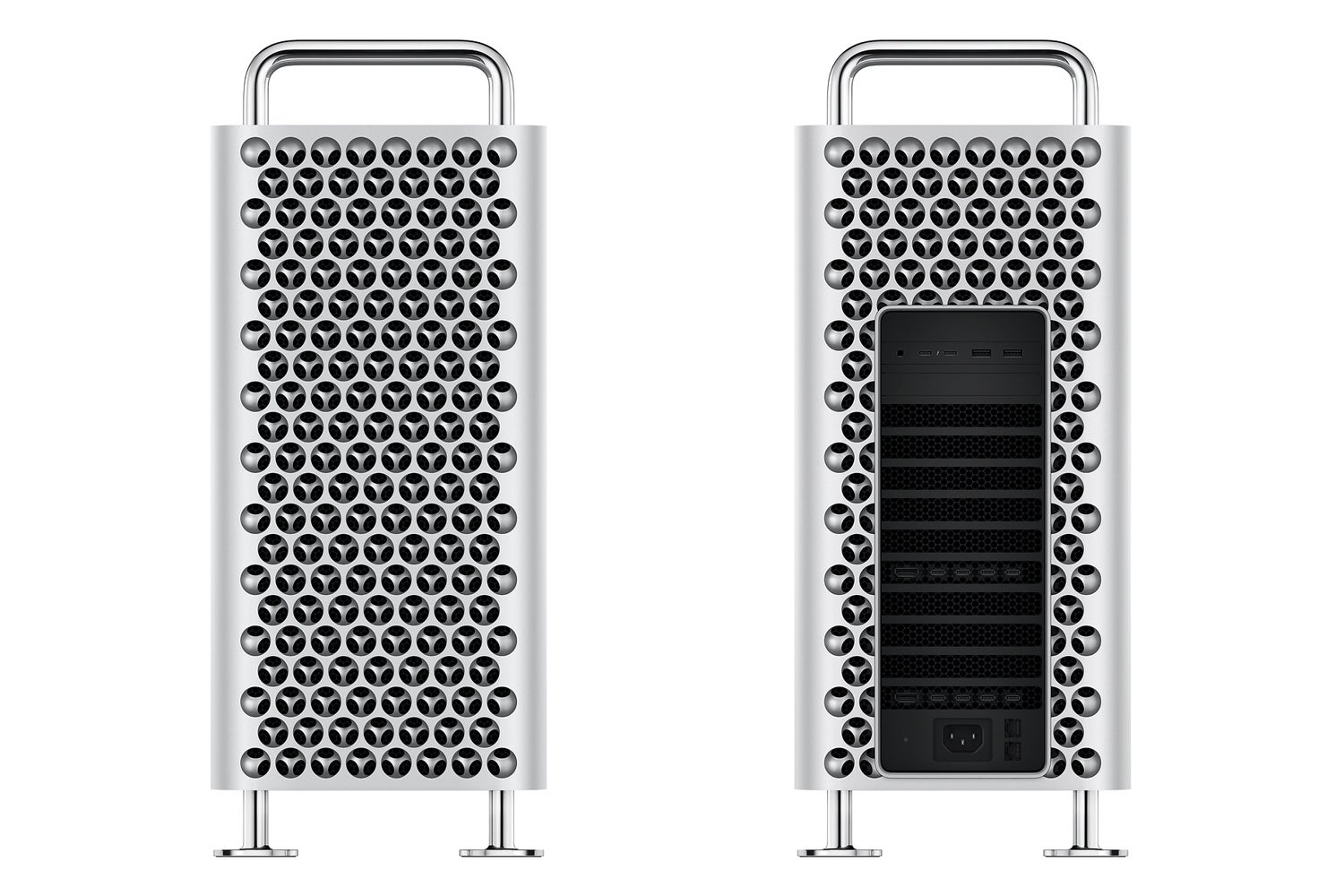

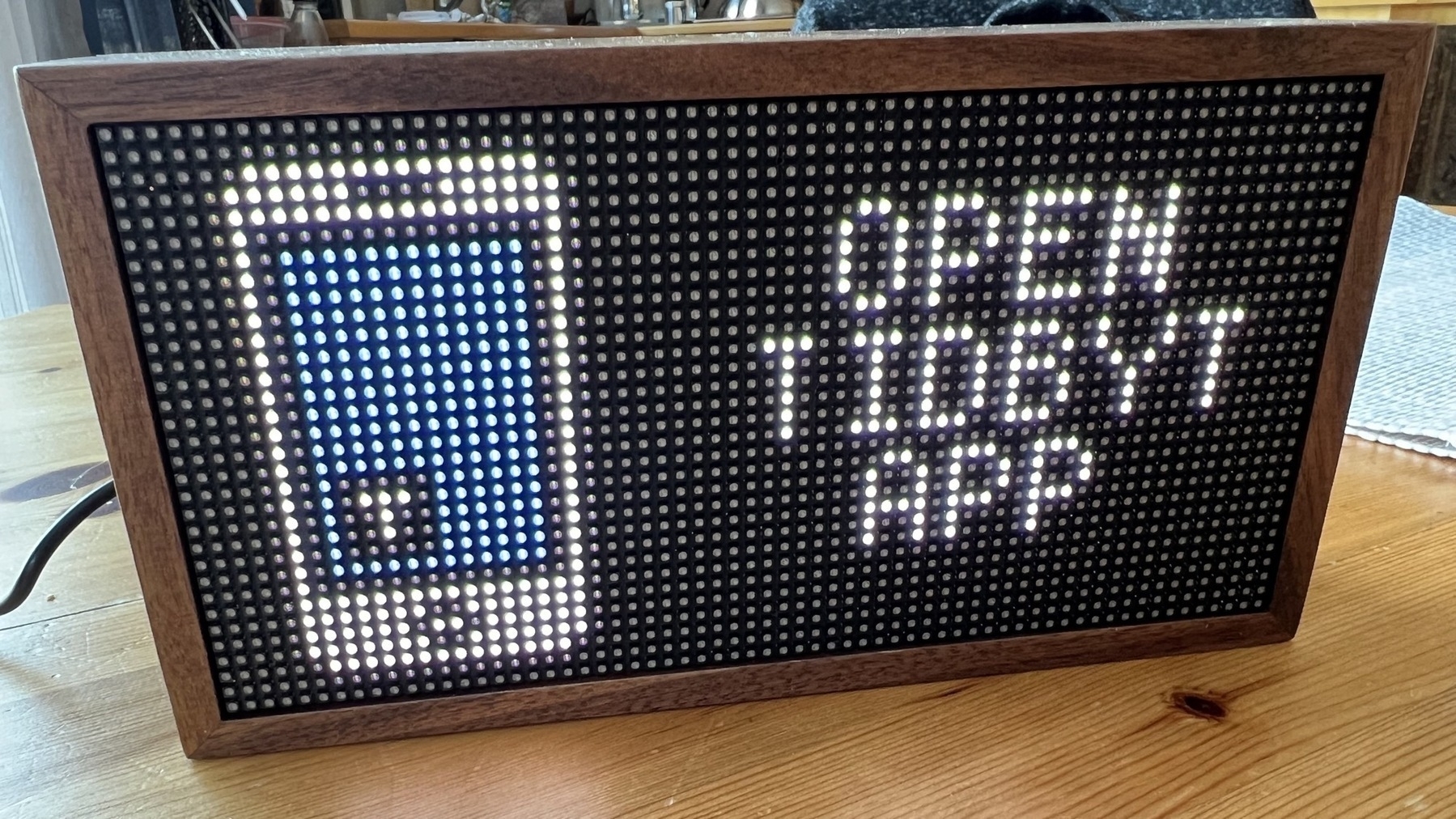

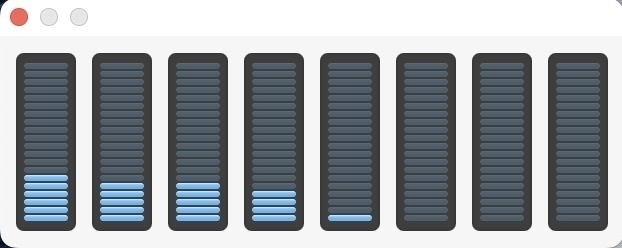

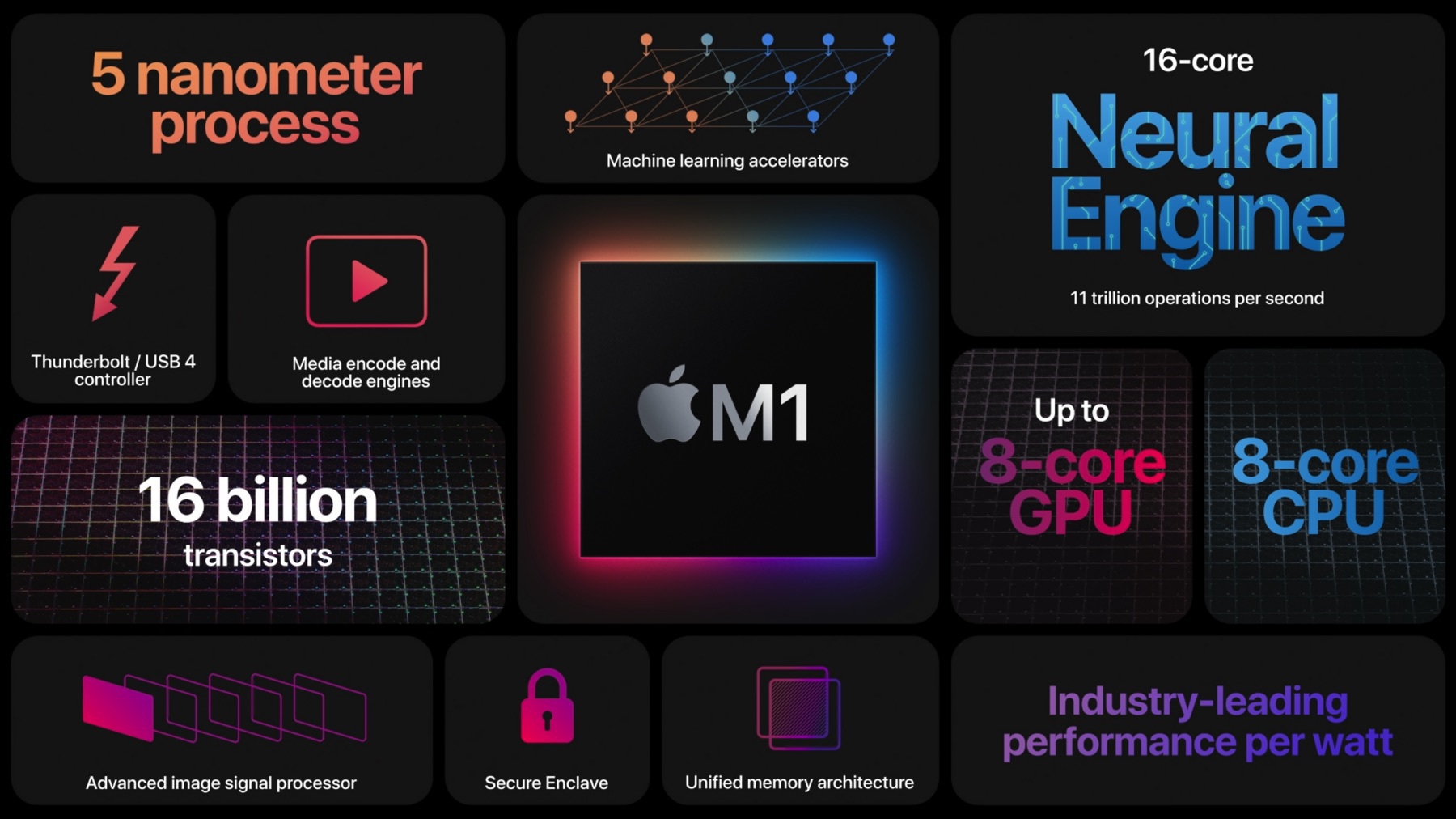

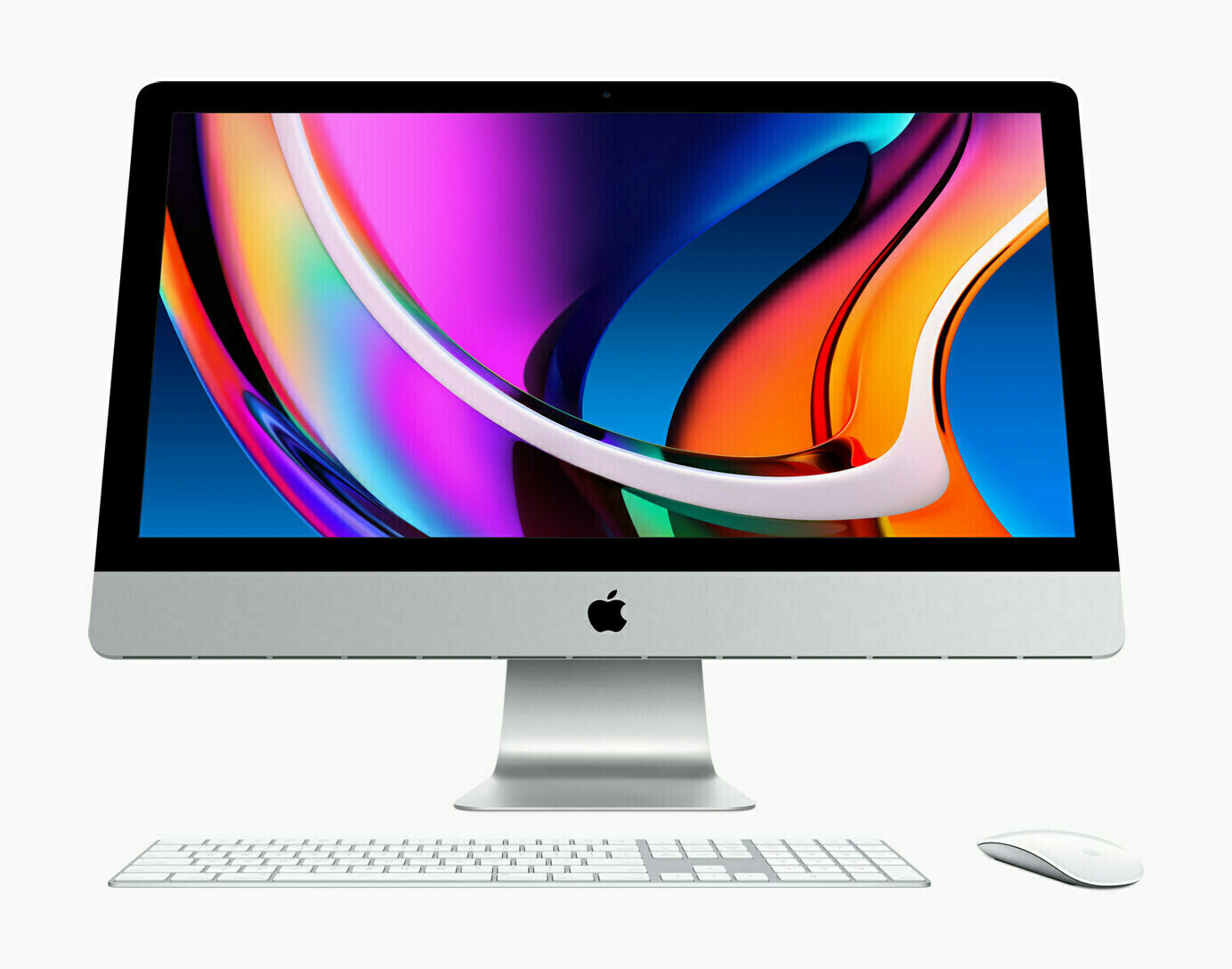

Last night I installed and configured ClawdBot on the M4 Mac mini that sits on my home office desk. Now I’m remote-controlling it through Discord, which I chose over Telegram or iMessage because ClawdBot’s Discord support felt more mature. I can ask simple things and get simple answers. It’s exciting. Still, the setup was more complicated than I expected. ClawdBot is a nerdy thing for really nerdy people. MacStories has more commentary on ClawdBot.

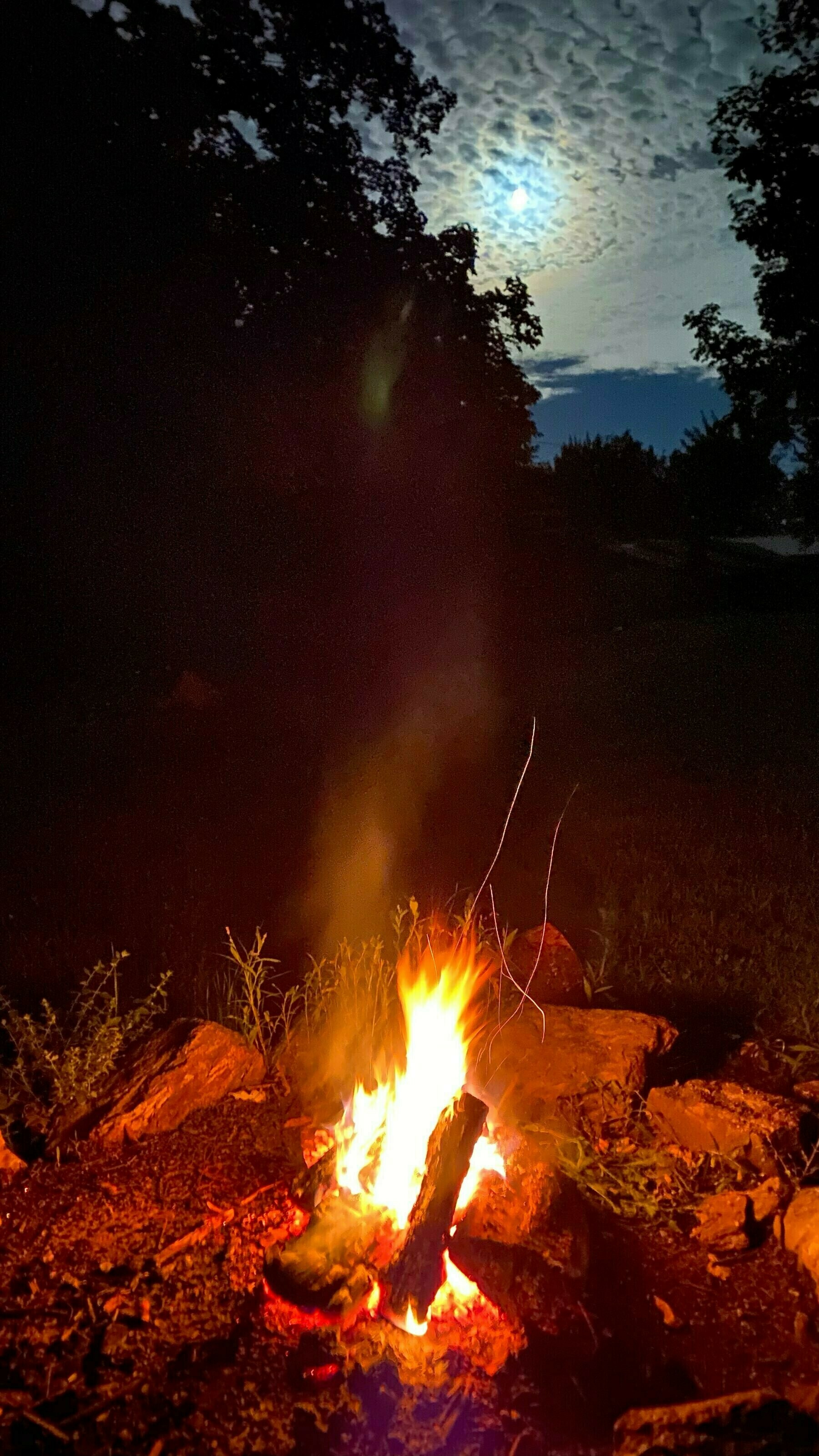

I see a lot of potential for learning and testing new things with ClawdBot, and I’ll probably dedicate much of my spare time to it in the coming months. For now, though, I’m about to leave on a vacation to Egypt, so I’ll set it aside for a few weeks.